From Notebook to Deployed

AI lowered the barrier to building. The thinking is still ours.

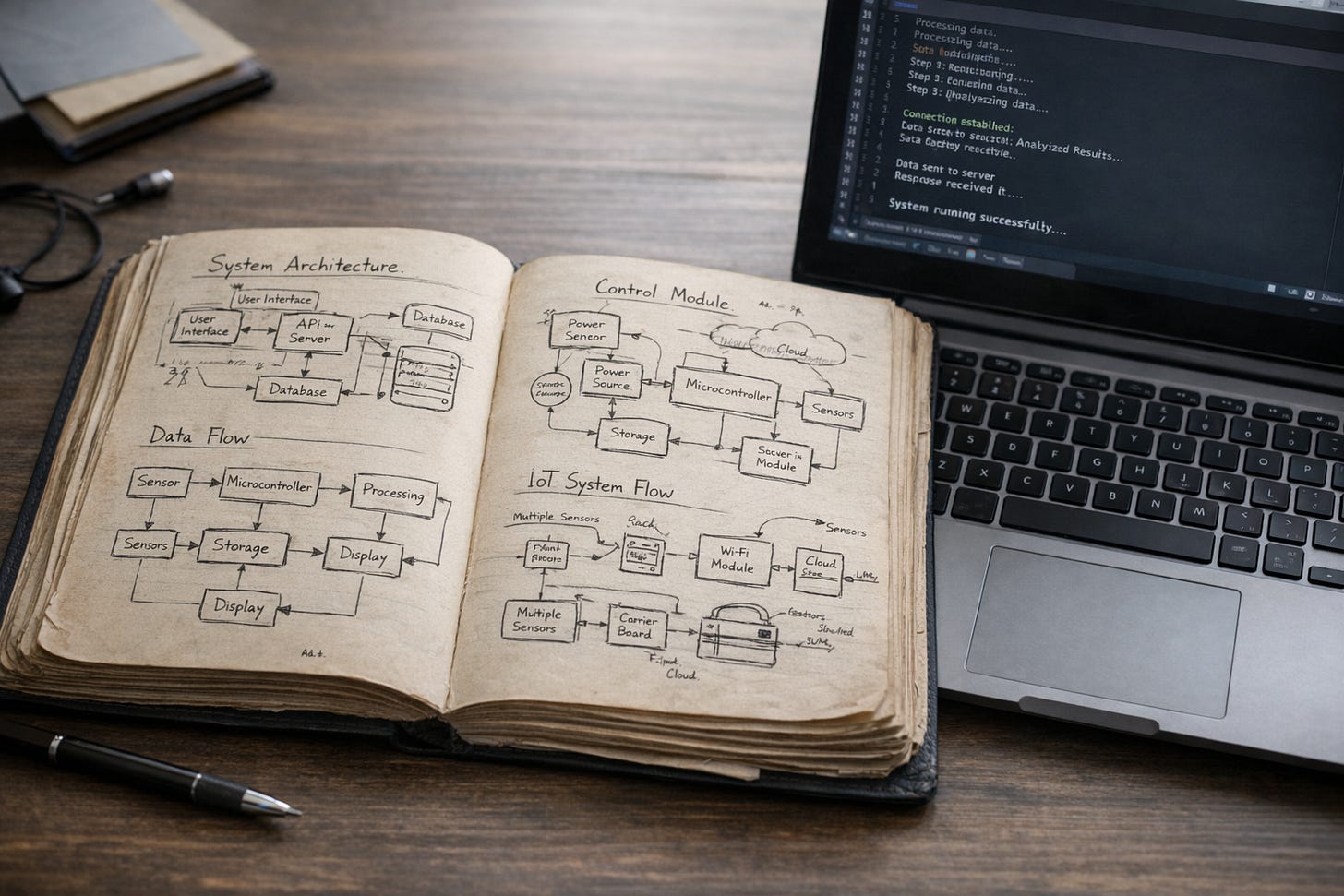

Over a decade ago, I had an idea for a cab app tailored to the Philippine context. I believed in it enough to fly a full-stack developer from Manila to Singapore to build it with me. I hosted the developer at my place. We spent days whiteboarding flows, debating features, sketching user journeys, and gradually wiring pieces together. It was fun and intense, but also expensive and slow. Weeks passed before we had something that looked like a working prototype.

That experience left a lasting impression on me. Shipping an idea required time, money, coordination, and the right technical depth. Most experimental ideas don’t justify that level of effort, so they sit in notebooks or in conversations because the overhead of productizing them outweighs the curiosity.

For most of my career, I never had trouble with ideas or analytical execution. I can build models, write code, and think through systems from first principles. The typical blocker was more practical: turning those designs into real, deployed applications without assembling a full engineering team.

I could simulate almost anything in Python or C++. I could reason through edge cases and trade-offs. But building something end-to-end, with orchestration, interfaces, persistent storage, logging, authentication, and all the invisible plumbing that makes software usable outside a notebook, is a different layer of work.

Over the past few months, that friction has dropped.

Using Claude Code and Cursor, I built several production-level systems. One is a governance and assurance system powered by small language models running locally. It’s an agentic setup with several orchestrators coordinating scoped agents, validation layers, and feedback loops. A few years ago, I would have designed this on paper and maybe simulated parts of it. But I would not have deployed it myself.

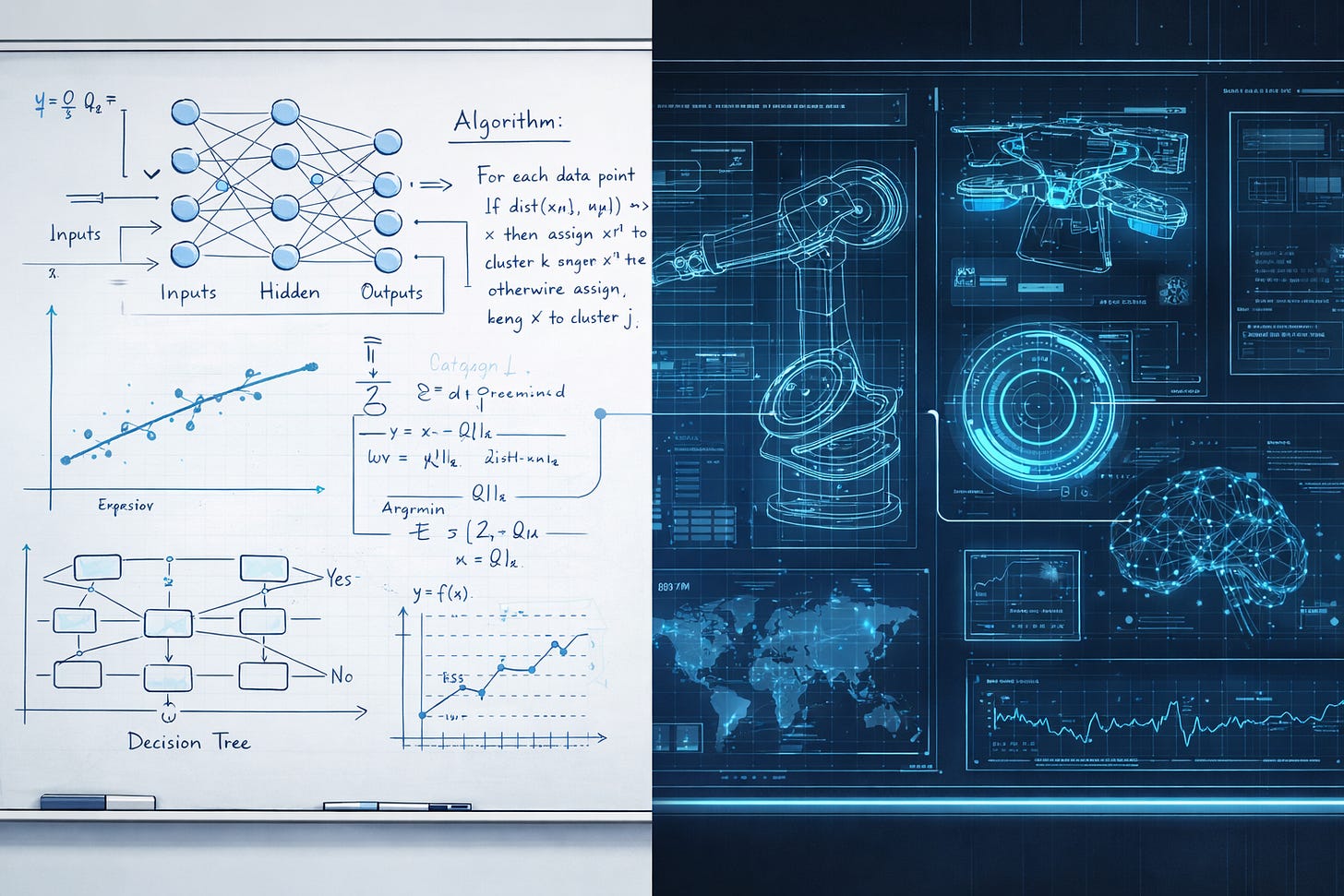

The models generated much of the code. They scaffolded services, wired components, and refactored quickly. What they did not do was decide how the system should be structured. The architectural decisions remained mine, especially the decision to decompose the system into agents.

That choice came from constraint. I didn’t want, and realistically couldn’t support, a heavy monolith. Compute was limited. I also wanted behavior that was easier to reason about and easier to contain if something went wrong. Breaking the system into narrowly scoped agents with explicit coordination made it more manageable. The optimization came from thinking through how the system should behave under pressure.
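To make "narrowly scoped agents with explicit coordination" a little more concrete, here is a minimal sketch, not the actual system. It assumes each agent declares the task kinds it owns and that the orchestrator refuses to guess when ownership is missing or ambiguous. The `Agent` and `Orchestrator` classes, the task names, and the toy handlers are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass(frozen=True)
class Agent:
    """A narrowly scoped agent: it may only handle the task kinds it declares."""
    name: str
    scope: frozenset[str]
    handle: Callable[[dict], dict]


@dataclass
class Orchestrator:
    """Routes each task to exactly one agent whose scope covers it."""
    agents: list[Agent]
    log: list[str] = field(default_factory=list)

    def dispatch(self, task_kind: str, payload: dict) -> dict:
        owners = [a for a in self.agents if task_kind in a.scope]
        if len(owners) != 1:
            # Missing or ambiguous ownership is an error, not something to improvise around.
            raise LookupError(f"{task_kind!r}: expected exactly one owner, found {len(owners)}")
        agent = owners[0]
        result = agent.handle(payload)
        self.log.append(f"{agent.name} handled {task_kind}")
        return result


# Hypothetical agents for illustration only.
checker = Agent("checker", frozenset({"check_totals"}),
                lambda p: {"ok": sum(p["items"]) == p["total"]})
summarizer = Agent("summarizer", frozenset({"summarize"}),
                   lambda p: {"summary": p["text"][:20]})
orch = Orchestrator([checker, summarizer])
print(orch.dispatch("check_totals", {"items": [1, 2, 3], "total": 6}))  # {'ok': True}
```

The design choice is that scope is declared data rather than emergent behavior, so a task nobody owns fails loudly instead of being absorbed by whichever agent happens to respond.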

There was a moment in one of these builds that made this very clear.

The platform was already highly customized and fully interactive. The interface was smooth. The dashboards were clean. Reports were generated instantly. It could flag issues, surface patterns, and recommend actions. Visually and functionally, it looked complete. Even the numbers appeared coherent and authoritative. To a client, it would have inspired confidence.

And yet, something about the outputs felt off to me.

The results were internally consistent. Nothing was crashing, and nothing looked obviously broken. But after years of manually working through similar analyses, I could tell the patterns were slightly misaligned. It was subtle. Without that accumulated mathematical intuition, it would have been easy to accept the results at face value.

So I went back through the computations step by step. The issue wasn’t in the code's mechanics. It was in the assumptions guiding it. Some parameters had been inferred rather than explicitly defined. The structure held together, but it was drifting from the model’s true intent.

Correcting it meant tightening the formulation, rewriting parts of the logic, and explicitly specifying the assumptions and algorithms, rather than leaving room for interpretation. Once clarified, the outputs changed. They aligned with what the analysis was actually meant to represent.
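One way to stop parameters from being inferred is to make every assumption a required, validated field. The sketch below assumes a simple flagging calculation. The `Assumptions` class, its two fields, and `flag_items` are hypothetical illustrations, not the formulation used in the system described here. The point is that leaving out a parameter raises an error instead of silently picking a default, and a choice such as "do large negative amounts count?" is stated explicitly.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Assumptions:
    """Every parameter must be stated explicitly: no defaults, nothing inferred."""
    materiality_threshold: float  # hypothetical: amount above which an item is flagged
    use_absolute_value: bool      # hypothetical: whether large negative amounts also count

    def __post_init__(self) -> None:
        if self.materiality_threshold <= 0:
            raise ValueError("materiality_threshold must be positive")


def flag_items(amounts: list[float], a: Assumptions) -> list[int]:
    """Return indices of amounts that exceed the stated materiality threshold."""
    values = [abs(x) if a.use_absolute_value else x for x in amounts]
    return [i for i, v in enumerate(values) if v > a.materiality_threshold]


amounts = [250.0, -1500.0, 999.0, 1200.0]
print(flag_items(amounts, Assumptions(1000.0, use_absolute_value=True)))   # [1, 3]
print(flag_items(amounts, Assumptions(1000.0, use_absolute_value=False)))  # [3]
```

The two calls differ only in one stated assumption, and the outputs change accordingly, which is the kind of shift that stays hidden when the choice is left to interpretation.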

That experience reinforced something simple. A polished interface and functioning code are not the same as a sound analytical foundation. Systems can produce plausible structure and persuasive outputs while resting on underspecified assumptions. Precision in assumptions remains the designer's responsibility.

Because my work is closely tied to governance and assurance, I design with guardrails from the start. Who can act autonomously. What requires validation. Where authority escalates. What gets logged. In an agentic system, those choices directly shape outcomes. Allowing agents to act means encoding decision rights in software.
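Encoding decision rights in software can be as plain as a policy table consulted before every action, with each request written to an audit log. This is a minimal sketch under stated assumptions: the three authority levels, the action names in `POLICY`, and the `request` function are hypothetical, and unknown actions are assumed to escalate rather than proceed.

```python
from enum import Enum


class Authority(Enum):
    AUTONOMOUS = "autonomous"
    NEEDS_VALIDATION = "needs_validation"
    ESCALATE = "escalate"


# Hypothetical policy table: who may act on their own, what needs validation, what escalates.
POLICY: dict[str, Authority] = {
    "draft_report": Authority.AUTONOMOUS,
    "flag_issue": Authority.NEEDS_VALIDATION,
    "recommend_action": Authority.ESCALATE,
}

# Every request is recorded, whatever the decision.
audit_log: list[tuple[str, str, str]] = []


def request(agent: str, action: str) -> Authority:
    """Look up the authority level for an action and log the decision."""
    # Anything not explicitly granted defaults to escalation, never autonomy.
    decision = POLICY.get(action, Authority.ESCALATE)
    audit_log.append((agent, action, decision.value))
    return decision


print(request("reporter", "draft_report"))    # Authority.AUTONOMOUS
print(request("reporter", "delete_records"))  # Authority.ESCALATE
```

Defaulting to escalation keeps the system conservative as new actions are added: autonomy has to be granted explicitly, and the log shows every place it was used.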

I’ve written before about how innovation and governance need to move together inside institutions and how boards should demand clarity and proportionality in AI oversight. The same thinking applies here. Structure determines whether a system remains understandable as it grows.

What surprised me was how much the constraints actually helped. Limited resources pushed the system toward decomposition. Limited tolerance for ambiguity encouraged more careful validation. The architecture ended up cleaner and more modular because it had to be.

The systems we built are running and doing real work. I find that satisfying. The part worth being careful about is the gap between experimenting on your own infrastructure and deploying something that affects real users, regulatory exposure, or institutional risk. At that point, experienced engineers, proper security reviews, and operational rigor matter. Generative AI lowers the barrier to building; it does not change what responsible deployment requires.

The feedback loop is shorter now. Ideas that used to stay abstract are running systems I can test and refine. I don’t have to wait weeks or assemble a team just to see if something works. What the models can’t do is think through the problem for you. The system is only as good as the thinking that went into it, and that part hasn't changed.